Data Transformation Complexity Balance | Estateplanning

Overview

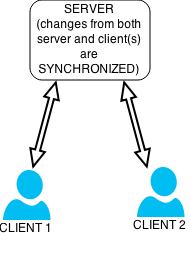

Balancing data transformation complexity is a critical aspect of data management because it directly affects the efficiency, accuracy, and scalability of data processing pipelines. Data transformation, which converts data from one format or structure to another, ranges from simple to complex depending on the nature of the data and the requirements of the target system. The complexity of a transformation must be weighed against the need for data consistency, integrity, and reliability. This balance matters in data synchronization, data integration, and [[data-warehousing|data warehousing]], where [[business-intelligence|business intelligence]] workloads rely on [[etl-tools|ETL tools]] to transform and load data into centralized repositories. As data volumes and varieties continue to grow, the balance becomes more important, with [[gartner|Gartner]] predicting that by 2025, 80% of organizations will have implemented [[data-fabric|data fabric]] architectures to manage their data assets. Striking the balance well helps organizations draw insights from their data, improve decision-making, and drive business outcomes. [[netflix|Netflix]] and [[airbnb|Airbnb]] are cited as examples, having used [[apache-beam|Apache Beam]] and [[apache-spark|Apache Spark]] to streamline their data processing workflows.
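As a minimal sketch of the trade-off described above, the following Python example pairs a deliberately simple transformation (type conversion only, one output row per input row) with a cheap integrity check. The record layout, field names, and the helpers `simple_transform` and `check_integrity` are hypothetical and chosen for illustration; they are not drawn from any particular ETL tool.

```python
from datetime import datetime
from decimal import Decimal

# Hypothetical source records; all values arrive as strings.
SOURCE_ROWS = [
    {"id": "1", "signup": "2024-01-05", "amount": "19.90"},
    {"id": "2", "signup": "2024-02-17", "amount": "5.00"},
]


def simple_transform(row):
    """Low-complexity step: convert types, one output row per input row."""
    return {
        "id": int(row["id"]),
        "signup": datetime.strptime(row["signup"], "%Y-%m-%d").date(),
        # Decimal avoids floating-point rounding when converting to cents.
        "amount_cents": int(Decimal(row["amount"]) * 100),
    }


def check_integrity(source, target):
    """Cheap consistency checks: same row count and unique keys."""
    ids = [r["id"] for r in target]
    return len(source) == len(target) and len(ids) == len(set(ids))


TARGET_ROWS = [simple_transform(r) for r in SOURCE_ROWS]

if not check_integrity(SOURCE_ROWS, TARGET_ROWS):
    raise ValueError("transformation changed row count or duplicated keys")
```

Because the transformation is one-to-one, the integrity check stays trivial. A more complex step (joins, aggregation, deduplication) would generally need correspondingly richer checks, which is the cost side of the balance.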