Bidirectional Encoder Representations from Transformers

Overview

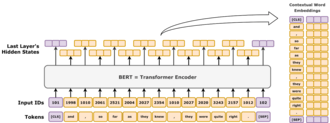

Bidirectional Encoder Representations from Transformers, commonly referred to as BERT, is a pre-trained language model developed by Google that achieved state-of-the-art results on a wide range of natural language processing tasks when it was released. BERT was introduced by Jacob Devlin and colleagues at Google in 2018 and has since become a widely used tool in the field of artificial intelligence. Its success is attributed to its ability to learn contextual relationships between words in a sentence: the representation of each word depends on the words to both its left and its right, which is the sense in which the model is bidirectional, and this helps it capture the nuances of human language. This is made possible by the Transformer architecture, which was introduced in 2017 by researchers at Google, including Ashish Vaswani and Noam Shazeer, and has been further developed by companies like Facebook and Microsoft.
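The idea of a contextual, bidirectional representation can be illustrated with a small sketch. The code below is not BERT: it is a toy, single-head self-attention step in plain Python with identity projections, and the three-dimensional word vectors in `EMBEDDINGS` are made up for illustration. The real model uses learned projections, many attention heads, and many stacked layers. The sketch only shows that when every position may attend to every other position, the representation of "bank" changes with the word that follows it, whereas under a left-to-right restriction it does not.

```python
import math

# Hypothetical toy embeddings, chosen by hand for illustration only.
EMBEDDINGS = {
    "bank": [1.0, 0.0, 0.0],
    "river": [0.5, 1.0, 0.0],
    "money": [0.5, 0.0, 1.0],
}


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors, bidirectional=True):
    """Toy single-head self-attention with identity query/key/value maps."""
    dim = len(vectors[0])
    outputs = []
    for i, query in enumerate(vectors):
        # Bidirectional: position i sees every position in the sequence.
        # Left-to-right: position i sees only positions 0..i.
        visible = range(len(vectors)) if bidirectional else range(i + 1)
        scores = [
            sum(q * k for q, k in zip(query, vectors[j])) / math.sqrt(dim)
            for j in visible
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible vectors.
        outputs.append(
            [sum(w * vectors[j][d] for w, j in zip(weights, visible))
             for d in range(dim)]
        )
    return outputs


def encode(tokens, bidirectional=True):
    """Look up toy embeddings and apply one attention step."""
    return self_attention([EMBEDDINGS[t] for t in tokens], bidirectional)


# Representation of "bank" (position 0) in two different contexts.
bank_river = encode(["bank", "river"])[0]
bank_money = encode(["bank", "money"])[0]
causal_river = encode(["bank", "river"], bidirectional=False)[0]
causal_money = encode(["bank", "money"], bidirectional=False)[0]

print("bidirectional differs:", bank_river != bank_money)
print("left-to-right differs:", causal_river != causal_money)
```

In the bidirectional case the vector for "bank" mixes in information from the following word, so the two contexts yield different vectors. In the left-to-right case the first position can only attend to itself, so both contexts yield the same vector.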